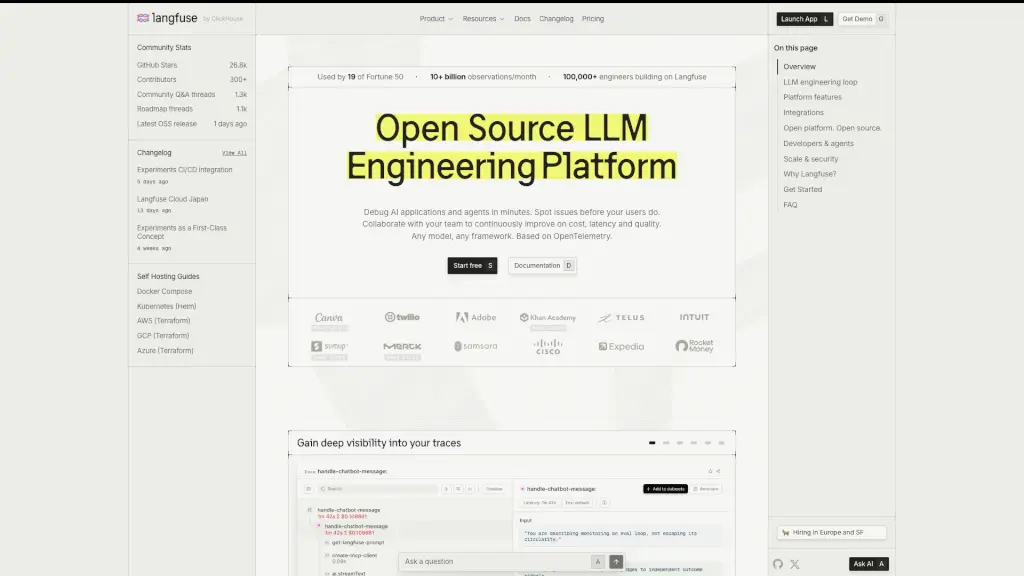

What is Langfuse?

Langfuse is an open-source LLM engineering platform designed to help developers build, debug, and improve AI applications—from early prototypes to high-scale production systems. It brings together tracing, prompt management, evaluations, and experiments in one unified workflow so teams can continuously optimize their LLM-powered products using real user data.

Unlike traditional observability tools that struggle with the large, complex traces of AI apps, Langfuse is built specifically for LLMs. It captures every step—model calls, tool use, retrieval—and makes it easy to spot issues like high latency, rising costs, or poor output quality before users do.

What are the features of Langfuse?

- Tracing: Capture hierarchical, OpenTelemetry-based traces of every LLM call, tool invocation, and retrieval step with rich input/output context.

- Prompt Management: Decouple prompts from code, deploy them with one click, roll back changes instantly, and collaborate with your team on improvements.

- Automated Evaluations: Run LLM-as-a-judge, custom heuristic functions, or human reviews to assess output quality at scale.

- Experiments: Test prompt or model variants side-by-side using structured test cases and compare results with metrics like cost, latency, and accuracy.

- Human Annotation: Create collaborative review workflows to label production data and build golden datasets for training and evals.

- Cost & Latency Monitoring: Track spending, response times, and quality trends with dashboards and automated alerts.

- Playground: Test and compare prompts or models directly on real production inputs without deploying code.

- Agent-Friendly Tooling: Includes CLI, MCP server, and AI skills so coding agents (like Cursor or Claude) can manage Langfuse via natural language.
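
To make the idea of a hierarchical trace concrete, here is a minimal, standard-library-only sketch of how nested steps might be modeled and aggregated. It is a conceptual illustration, not the Langfuse SDK: the `Observation` class, its fields, and `slowest_step` are hypothetical names, the sample values are made up, and steps are assumed to run one after another so that latencies add up.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Tuple


@dataclass
class Observation:
    """One step in a trace (hypothetical model, not a Langfuse SDK type)."""
    name: str
    kind: str                  # e.g. "span", "generation", "tool", "retrieval"
    latency_ms: float = 0.0    # time spent in this step itself, excluding children
    cost_usd: float = 0.0      # cost attributed to this step only
    children: List["Observation"] = field(default_factory=list)

    def total_latency_ms(self) -> float:
        # Assumes children run sequentially, so their latencies simply add up.
        return self.latency_ms + sum(c.total_latency_ms() for c in self.children)

    def total_cost_usd(self) -> float:
        return self.cost_usd + sum(c.total_cost_usd() for c in self.children)

    def walk(self, depth: int = 0) -> Iterator[Tuple[int, "Observation"]]:
        # Depth-first traversal that yields (nesting depth, observation).
        yield depth, self
        for child in self.children:
            yield from child.walk(depth + 1)


def slowest_step(root: Observation) -> Observation:
    """Return the single step with the highest own latency."""
    return max((obs for _, obs in root.walk()), key=lambda obs: obs.latency_ms)


# Illustrative values only: one request with a retrieval step and a model call.
trace = Observation(
    name="answer-question",
    kind="span",
    latency_ms=5.0,
    children=[
        Observation(name="vector-search", kind="retrieval", latency_ms=120.0),
        Observation(name="chat-completion", kind="generation",
                    latency_ms=850.0, cost_usd=0.002),
    ],
)

for depth, obs in trace.walk():
    print("  " * depth + f"{obs.kind}: {obs.name} ({obs.latency_ms} ms)")
```

Keeping each step's own latency and cost separate from its children's is one way to let a viewer roll totals up the tree while still pointing at the individual step responsible for a slowdown.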

What are the use cases of Langfuse?

- Debugging a failing RAG pipeline by inspecting retrieval quality and LLM responses in a single trace

- Reducing API costs by identifying inefficient prompt patterns across thousands of production requests

- Running A/B tests between two prompt versions to see which generates more accurate customer support replies

- Building a human-reviewed dataset of high-quality outputs to fine-tune a custom model

- Monitoring latency spikes after deploying a new agent workflow across user sessions

- Collaborating with product and ML teams to iteratively improve prompt performance using shared eval results
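
As a rough illustration of the A/B testing use case, the sketch below aggregates per-run results for two prompt versions into accuracy, mean latency, and total cost. It assumes the runs have already been collected and judged; the `RunResult` type, the `summarize` helper, the variant names, and all numbers are hypothetical and are not taken from Langfuse.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class RunResult:
    """Outcome of running one test case against one prompt variant (hypothetical)."""
    variant: str
    correct: bool
    latency_ms: float
    cost_usd: float


def summarize(results: List[RunResult]) -> Dict[str, Dict[str, float]]:
    """Group results by variant and compute simple comparison metrics."""
    by_variant: Dict[str, List[RunResult]] = {}
    for r in results:
        by_variant.setdefault(r.variant, []).append(r)
    return {
        variant: {
            "accuracy": mean([1.0 if r.correct else 0.0 for r in runs]),
            "mean_latency_ms": mean([r.latency_ms for r in runs]),
            "total_cost_usd": sum(r.cost_usd for r in runs),
        }
        for variant, runs in by_variant.items()
    }


# Made-up sample data for two prompt versions on the same two test cases.
results = [
    RunResult("prompt-v1", True, 400.0, 0.001),
    RunResult("prompt-v1", False, 600.0, 0.001),
    RunResult("prompt-v2", True, 500.0, 0.002),
    RunResult("prompt-v2", True, 700.0, 0.002),
]

summary = summarize(results)
for variant, metrics in summary.items():
    print(variant, metrics)
```

Reporting cost and latency next to accuracy makes the trade-off visible: in this made-up data the second variant is more accurate but also slower and more expensive.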

How to use Langfuse?

- Install the Langfuse SDK for your language (Python, TypeScript, or another supported language) and add tracing to your LLM calls; basic setup is meant to take under 5 minutes.

- Store your prompts in Langfuse’s prompt registry so you can version, deploy, and manage them outside your codebase.

- Set up automated evaluators (e.g., factuality, tone, or custom logic) to score every production trace.

- Use the Langfuse Playground to experiment with new prompts on real user inputs before rolling them out.

- Invite teammates to review ambiguous or critical traces through the human annotation interface.

- Monitor dashboards for cost, latency, and quality trends, and set alerts for anomalies.
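
Two of the steps above, managing prompts outside the codebase and scoring outputs with custom logic, can be sketched in plain Python. This is a toy in-memory stand-in meant to show the workflow, not the Langfuse client: `PromptRegistry`, its methods, the prompt name, and `length_score` are all hypothetical, and the real SDK's calls may look quite different.

```python
from typing import Dict, List


class PromptRegistry:
    """Toy in-memory prompt store with versions and a 'production' pointer.

    Hypothetical stand-in for a hosted prompt registry; not the Langfuse API.
    """

    def __init__(self) -> None:
        self._versions: Dict[str, List[str]] = {}
        self._production: Dict[str, int] = {}

    def create(self, name: str, template: str) -> int:
        """Store a new version and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        return len(versions)

    def deploy(self, name: str, version: int) -> None:
        """Point 'production' at a version; deploying an older one is a rollback."""
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        self._production[name] = version

    def get(self, name: str) -> str:
        """Return the template currently deployed to production."""
        return self._versions[name][self._production[name] - 1]


def length_score(output: str, max_words: int = 50) -> float:
    """Simple heuristic evaluator: 1.0 if the output fits the word budget, else 0.0."""
    return 1.0 if len(output.split()) <= max_words else 0.0


registry = PromptRegistry()
v1 = registry.create("support-reply", "Answer briefly: {question}")
v2 = registry.create("support-reply", "Answer in detail with sources: {question}")

registry.deploy("support-reply", v2)   # roll out the new version
registry.deploy("support-reply", v1)   # roll back without touching application code

prompt = registry.get("support-reply").format(question="How do I reset my password?")
print(prompt)
print(length_score("You can reset it from the account settings page."))
```

Because the application only asks for the prompt that is currently in production, changing or rolling back a prompt needs no code deployment, and the same evaluator function can be applied to every output regardless of which version produced it.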